This term we’ve been running our Visualisation module as part of the CASA MRes in Advanced Spatial Analysis and Visualisation. The flavour of this module is what you’d expect – finding interesting ways to communicate complex spatio-temporal data through static, animated and interactive tools. I teach every other week, focussing on the use of Processing to programmatically represent data; 3D design whiz and course director Andy Hudson-Smith tends to work with ArcGIS, Lumion and other 3D tools.

CASA student Fabian Neuhaus’s Twitter maps have had quite an impact in the past – showing patterns of geographical Twitter usage around London. We challenged our students to take a sample of the same data (collected by Fabian with Steve Gray’s big data tools) and visualise it. The dataset included the date and time of each tweet, its location (only geotagged tweets were considered), and other information such as the username, the platform the tweet was sent from, and the language. Here are some examples of what they came up with…

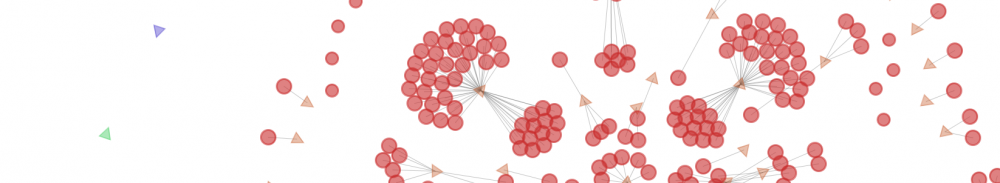

This was my initial (quick and dirty) stab at visualising the data:

One criticism of my initial attempt is that it lacks geographical markers – Alistair Leak tackled that problem by introducing a map. He chose not to blend time or spatial aspects, and with the underlying map this gives perhaps the most accurate representation of the data. The counter to that is that it contains a lot of visual information for the viewer to take in.

Ian Morton took an intermediate approach; a skeletal geographical boundary provides reference points for the viewer. Each tweet persists in time, shrinking and darkening over successive frames. This is a simple and effective visual grammar to provide some “history” or continuity to the vis, whilst retaining a focus on the most recent events.
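The shrink-and-darken grammar comes down to mapping each tweet's age to a size and a brightness. Here is a minimal sketch in plain Java (so it runs outside Processing); it is not Ian's code, and the names `LIFETIME_FRAMES` and `MAX_RADIUS`, along with the linear decay, are assumptions made purely for illustration.

```java
// Sketch of a "shrink and darken" marker: size and brightness decay with age.
// LIFETIME_FRAMES, MAX_RADIUS and the linear decay are illustrative assumptions.
final class TweetFade {
    static final int LIFETIME_FRAMES = 60;  // frames before a tweet disappears
    static final double MAX_RADIUS = 12.0;  // radius of a brand-new tweet

    // Fraction of life remaining: 1.0 when new, 0.0 once expired
    static double life(int ageFrames) {
        if (ageFrames <= 0) return 1.0;
        if (ageFrames >= LIFETIME_FRAMES) return 0.0;
        return 1.0 - (double) ageFrames / LIFETIME_FRAMES;
    }

    // Marker radius shrinks linearly with age
    static double radius(int ageFrames) {
        return MAX_RADIUS * life(ageFrames);
    }

    // Grey level (0-255) darkens linearly with age
    static int brightness(int ageFrames) {
        return (int) Math.round(255 * life(ageFrames));
    }

    public static void main(String[] args) {
        for (int age = 0; age <= LIFETIME_FRAMES; age += 15) {
            System.out.printf("age=%d radius=%.1f brightness=%d%n",
                    age, radius(age), brightness(age));
        }
    }
}
```

In a Processing sketch the same two values would typically feed `fill()` and `ellipse()` inside `draw()`, with each tweet's age incremented once per frame.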

Robin Edwards and Martin Dittus both went 3D, binning tweets over a geographical grid in a KDE-like fashion. Both of these elegant visualisations tackle the problem of interpolation – how to move from one data state to the next. In Robin’s version the bars jump instantly to the current data value and then (if subsequent data at that point is zero) fade gradually down to zero, like an old-school “graphic equaliser”. Martin has written a smooth transition between subsequent data points – so the bars move smoothly *towards* the latest value. These interpolations enhance the polish of a vis and provide a sense of continuity in a noisy or discontinuous dataset. Martin also added rich functionality for filtering the tweets by metadata (language, Twitter platform, etc.) – giving the user of the interactive app control over their view of the data.
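The two interpolation strategies are easy to compare side by side as per-frame update rules for a single bar. The sketch below is my own reconstruction of the general idea rather than Robin's or Martin's code; `DECAY_STEP` and `EASING` are hypothetical constants chosen for illustration.

```java
// Two ways to update a bar's height each frame, given the latest data value.
// DECAY_STEP and EASING are illustrative assumptions, not values from the students' sketches.
final class BarInterpolation {
    static final double DECAY_STEP = 1.0; // height lost per frame when there is no data
    static final double EASING = 0.25;    // fraction of the remaining gap closed per frame

    // "Graphic equaliser": jump straight to new data, fall gradually when data is zero
    static double equaliserStep(double current, double target) {
        if (target > 0) {
            return target;
        }
        return Math.max(0.0, current - DECAY_STEP);
    }

    // Easing: always move a fixed fraction of the way towards the target
    static double easeStep(double current, double target) {
        return current + (target - current) * EASING;
    }

    public static void main(String[] args) {
        double[] data = {8, 0, 0, 3, 0, 0, 0};
        double eq = 0, eased = 0;
        for (double value : data) {
            eq = equaliserStep(eq, value);
            eased = easeStep(eased, value);
            System.out.printf("data=%.0f equaliser=%.2f eased=%.2f%n", value, eq, eased);
        }
    }
}
```

The equaliser rule keeps peaks crisp at the cost of abrupt jumps upwards; the easing rule is smoother in both directions but always lags the data slightly.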

Jack Harrison decided to dispense with space, and treat Processing as a component in a more complex workflow. He analysed temporal patterns in R and output the result to Processing to create a “clock”. By saving this as a PDF, he was able to import it into Illustrator, allowing him to add the colour scheme and text and create this wonderfully Art Deco, rank-clock-like vis.

This is my take on the data – I’ll blog about it in more detail, but it’s essentially a Gaussian KDE with some transparency to give smooth blending between different time points as well as spatial blending. As I didn’t give the students an opportunity to give feedback on my offering (after we gave significant feedback on theirs), I’m sure they will express their opinions below the line…
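For anyone who wants the gist before the longer write-up, here is a rough sketch of the idea in plain Java: an unnormalised Gaussian kernel summed over a grid, with each new frame blended into the previous surface. It is a simplification under stated assumptions rather than the code behind the video; `BANDWIDTH`, `ALPHA` and the sample points are made up for illustration.

```java
// Minimal sketch: unnormalised Gaussian KDE on a grid, blended across frames.
// BANDWIDTH, ALPHA and the sample points are illustrative assumptions.
final class KdeBlend {
    static final double BANDWIDTH = 1.0; // kernel standard deviation, in grid units
    static final double ALPHA = 0.2;     // weight of the newest frame in the blend

    // Sum of Gaussian kernels centred on each point, evaluated at (x, y)
    static double density(double x, double y, double[][] points) {
        double sum = 0.0;
        for (double[] p : points) {
            double dx = x - p[0];
            double dy = y - p[1];
            sum += Math.exp(-(dx * dx + dy * dy) / (2 * BANDWIDTH * BANDWIDTH));
        }
        return sum;
    }

    // Evaluate the density at every cell of a size-by-size grid
    static double[][] surface(int size, double[][] points) {
        double[][] grid = new double[size][size];
        for (int row = 0; row < size; row++) {
            for (int col = 0; col < size; col++) {
                grid[row][col] = density(col, row, points);
            }
        }
        return grid;
    }

    // Temporal blending: mix the new frame into the running surface,
    // similar in spirit to drawing each frame with partial transparency
    static double[][] blend(double[][] previous, double[][] current) {
        double[][] out = new double[previous.length][previous[0].length];
        for (int row = 0; row < previous.length; row++) {
            for (int col = 0; col < previous[row].length; col++) {
                out[row][col] = (1 - ALPHA) * previous[row][col] + ALPHA * current[row][col];
            }
        }
        return out;
    }

    public static void main(String[] args) {
        double[][] frameA = {{1, 1}, {2, 1}};   // tweets (x, y) in the first time slice
        double[][] frameB = {{3, 3}};           // tweets in the next time slice
        double[][] running = surface(5, frameA);
        running = blend(running, surface(5, frameB));
        for (double[] row : running) {
            for (double v : row) {
                System.out.printf("%.2f ", v);
            }
            System.out.println();
        }
    }
}
```

A larger `BANDWIDTH` smooths more in space and a smaller `ALPHA` smooths more in time, so the two constants control the spatial and temporal blending independently.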

Impressive… great work everyone! I’m a particular fan of the grid-based extrusion approach for density mapping, and use this a lot for urban form maps. To see this visualisation style animated for temporal data is very cool.
